In this blog post, I discuss one of the most important and necessary elements of any decent marketing effort: an SEO Audit. I will explain the SEO Audit step-by-step so that you can understand the process well and I will also recommend various free and paid tools you can use to help.

Note that there is no single method of conducting a decent SEO Audit, so try to keep an open mind and be willing to learn from other marketing professionals and incorporate interesting aspects of their methodology into your own.

What is an SEO Audit?

An SEO Audit is a step-by-step analysis of the various SEO factors that affect the positioning of your website on search engine result pages (SERPs). It allows you to determine which areas to improve, with the objective of ranking higher on the SERPs.

As mentioned above, in SEO there is no single valid way of doing things, meaning each professional/agency has its own method of SEO analysis. Nevertheless, an SEO Audit should always cover a number of key factors that affect positioning. These key factors are considered by almost all SEO professionals as important positioning factors and, therefore, should be covered within your audit/analysis.

How to do an SEO Audit: step-by-step

We can group the Audit process into 5 phases or major blocks of analysis:

- Indexability/Tracking

- Content/Keywords/CTR

- Inbound Links/Domain Authority

- Performance/Adaptability/Usability

- Code and Tags

Block 1 of analysis. Indexability and Tracking

Phase 1, Indexability and Tracking, comprises the following important points that must be analysed:

1.1 Indexability

Indexability is the analysis of the URLs on your website that are indexed. That is to say, it is the analysis of the URLs that are shown on the search engine results pages e.g. Google.

This factor is very important. If a website wants any organic traffic, then it's going to want to have its relevant URLs indexed, otherwise potential readers/customers are going to have no way of finding it. Thus, the first step of the audit is to make sure the website can be indexed, so that it can rank for the search terms relevant to your business.

"A website must always have its relevant URLs indexed"

It's most important that the relevant URLs are indexed, and the non-relevant URLs are not.

By non-relevant URLs, I mean all the pages on a website that do not provide relevant information for particular searches, offer poor content (i.e. thin content) or have duplicate content with respect to other URLs on your website. Google prefers not to crawl irrelevant URLs, because that saves crawl time that could be used on other, relevant URLs.

In addition, in the case of very large websites (about 10,000 URLs or more), Google may have trouble seeing and indexing all the URLs, due to its limited crawl budget. This makes it even more necessary to spend time analysing potential indexing problems and solving them once we discover why relevant URLs are not being crawled and indexed.

Reasons why a URL may not be indexed

- A meta robots noindex tag. You can place a noindex tag on a webpage, meaning the search engines will crawl the content but won't index it.

- Disallow directive in robots.txt. This indication in the robots file tells search engines what URLs on a website should not be crawled.

- URLs with duplicate / irrelevant / poor content. Sometimes, pages on a website may not be indexed if they are considered by the search engine to be duplicate, irrelevant, or low quality, even though they have no noindex tag or disallow directive in the robots file.

- Orphan URLs. An orphan URL is a URL with no internal links pointing to it, meaning Google may skip that URL when it comes to crawling and indexing. This doesn't always happen, but in general if a URL is important to you and you want to index and position it, you should link to it internally.
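The first reason in the list above, the meta robots noindex tag, is easy to check automatically. The following is a minimal sketch using only Python's standard library; the `has_noindex` helper and the sample page are my own illustration, and it only looks at the meta tag in the HTML (it does not look at HTTP headers or robots.txt).

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. <meta name>), so default to "".
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            for part in attr_map.get("content", "").split(","):
                self.directives.add(part.strip().lower())


def has_noindex(html):
    """Return True if the page carries a meta robots noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


# Hypothetical page used only for illustration.
sample_page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(sample_page))
```

In a real audit you would feed this function the HTML of each URL you expect to be indexed and flag any page where it returns True.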

How to analyse whether a page is being indexed or not?

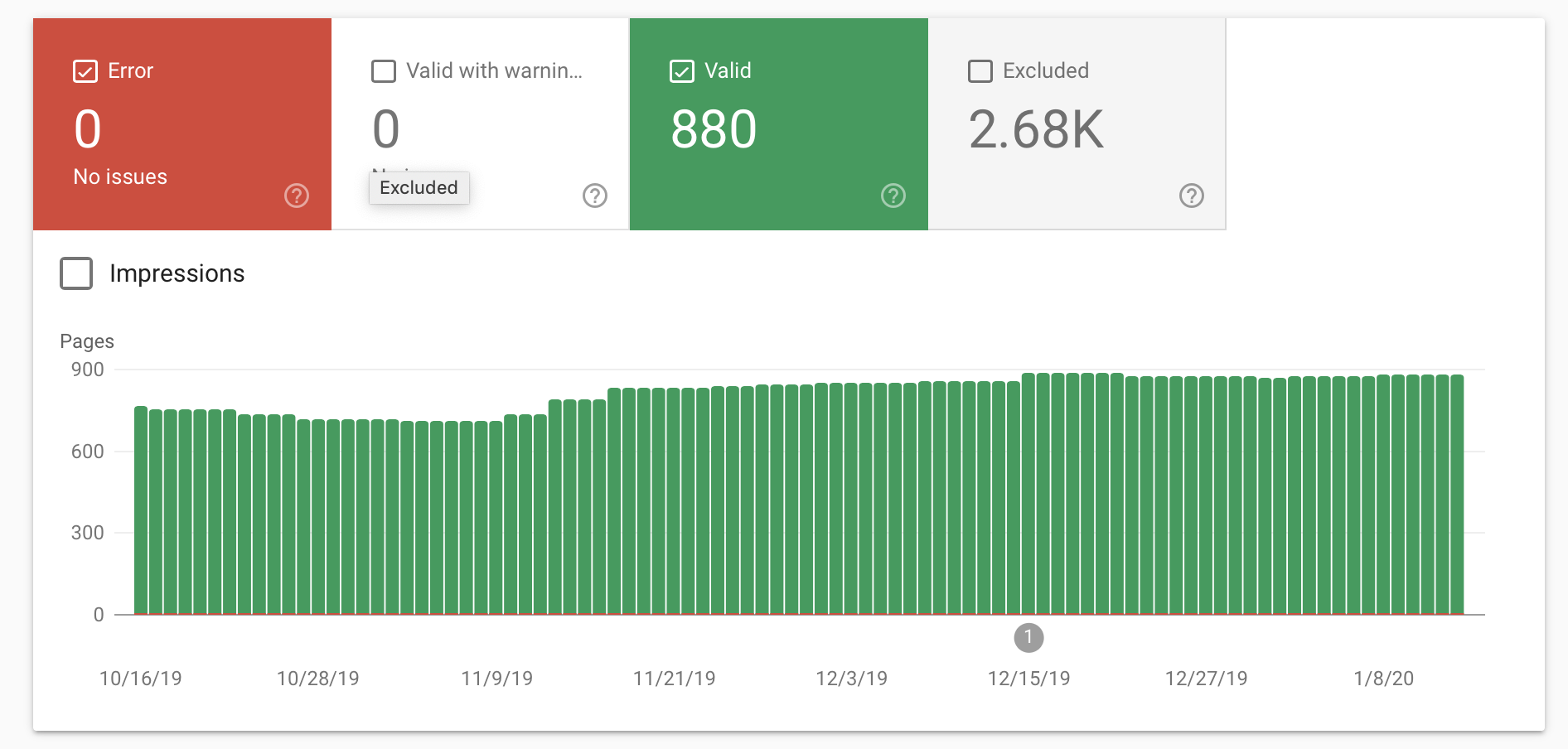

You can see whether or not URLs are indexed or non-indexed, or have other indexing problems, with Google Search Console. The Google Search Console is an excellent tool due to its simplicity of use and detail when it comes to detecting indexing problems and offering solutions.

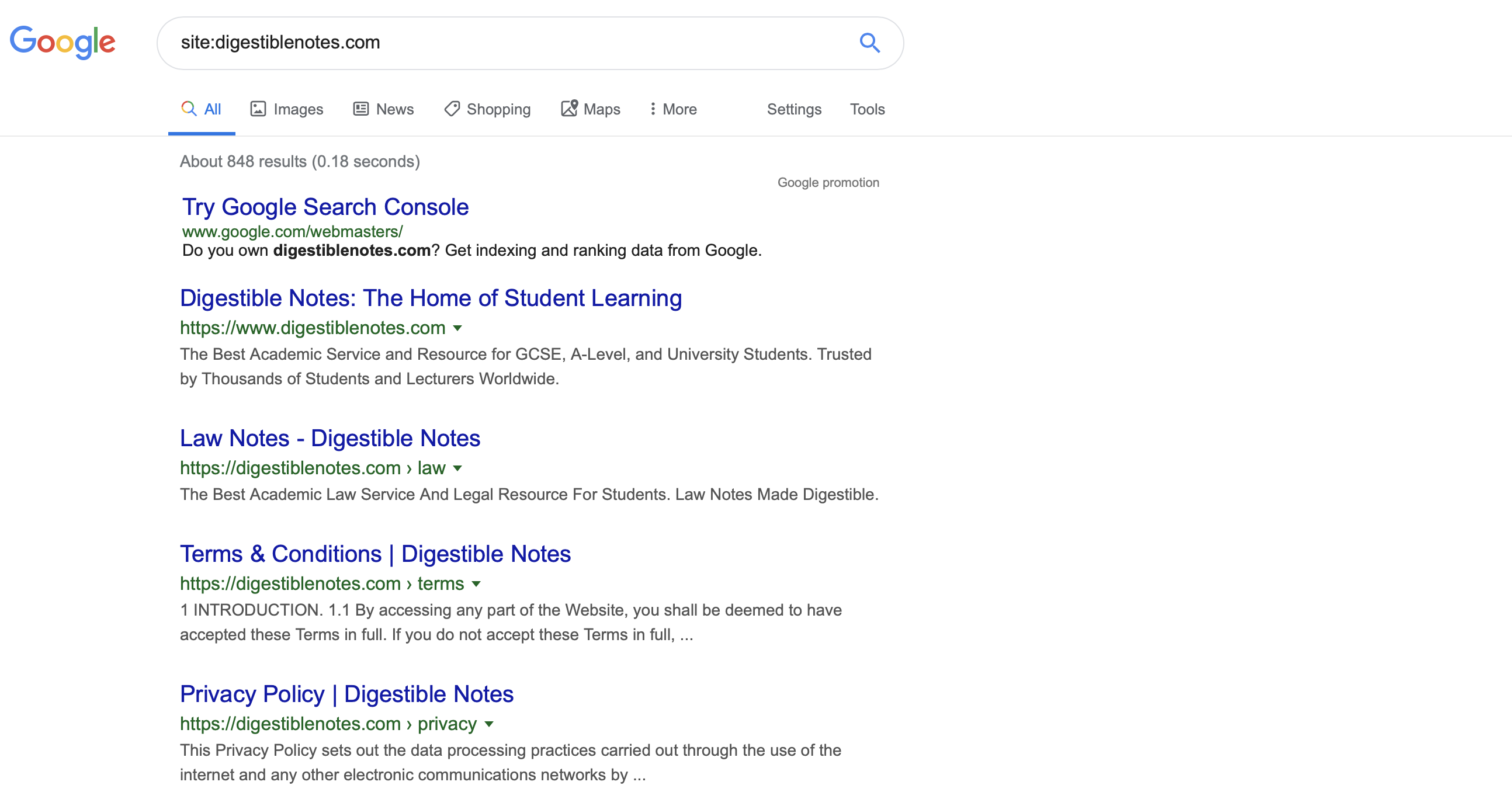

Another option to see the indexed URLs of a site is to search site:domain in Google. This will show you the (approximate) number of URLs for that domain indexed by Google, and you can also see how the search result snippets are displayed to users.

1.2 Robots

As mentioned previously, the robots.txt file is used to tell search engines which parts of a website should be crawled and which pages should be ignored. This is done using the disallow directive. You can also use an allow directive to allow search engine bots to crawl certain URLs as an exception to pages that are currently disallowed.

In the robots file you can also define the exact location of the sitemap to help search engines understand the structure of your website.

In your SEO Audit, then, be sure to verify whether or not there is a robots.txt file and that it is correctly configured to disallow/allow the URLs you want.
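To make the allow/disallow logic concrete, here is a small sketch that uses Python's built-in `urllib.robotparser` module. The robots.txt content and the example.com URLs are hypothetical. One caveat: this parser applies the first rule that matches, in file order, whereas Google picks the most specific matching rule, so I have placed the Allow exception before the Disallow line to get the same result under both interpretations.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used only for illustration.
ROBOTS_TXT = """\
User-agent: *
Allow: /private/press/
Disallow: /private/
Sitemap: https://example.com/sitemap_index.xml
"""


def build_parser(robots_txt):
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

for path in ("/blog/seo-audit", "/private/report", "/private/press/launch"):
    allowed = parser.can_fetch("*", "https://example.com" + path)
    print(path, "crawlable" if allowed else "blocked")

# site_maps() needs Python 3.8 or later; it lists the Sitemap lines found.
print(parser.site_maps())
```

Running the relevant URLs of a site through a check like this is a quick way to spot an important page that has been disallowed by mistake.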

How to view and analyse your robots.txt file?

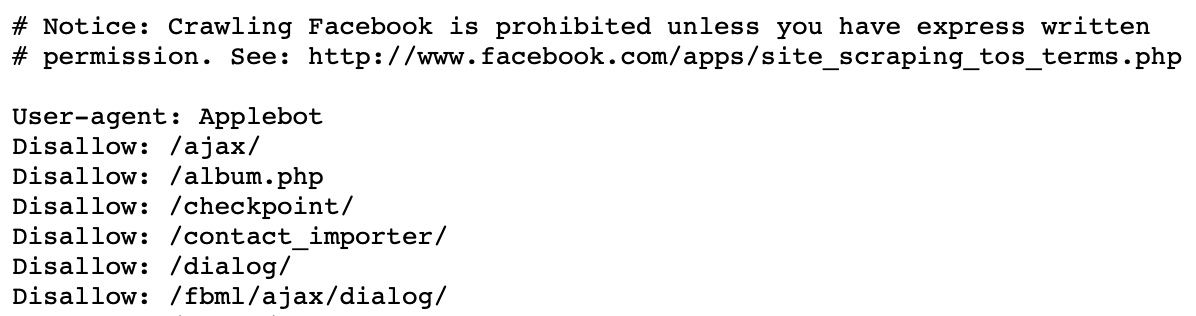

You can see the robots.txt file of any website by typing the URL domain/robots.txt. It is a file that is visible to both search engines and users, so you can analyse it easily even if you don't have access to the website's code. The screenshot shows part of Facebook's robots.txt file.

1.3 Sitemap

The XML sitemap file must contain a list of all the indexable URLs on the website to help Google crawl and index your site. If Googlebot can avoid errors and crawl your website faster, you make better use of your crawl budget, meaning more of your site can be indexed and shown on the SERPs.

Therefore, it's recommended that all websites, especially the most complex websites with lots of URLs, have a sitemap and add it to Search Console so the search engines can read it.

The relevant URLs that you want to index must be on the sitemap, but those that are irrelevant should be left off.

There are several ways to organise the sitemap. It can be classified by content types (pages, products, etc.), listed by date, by priority, by most recently published, etc. The important thing is that it is understandable for the search engines and that it has no errors in the list of URLs shown.

How to view and analyse the sitemap file?

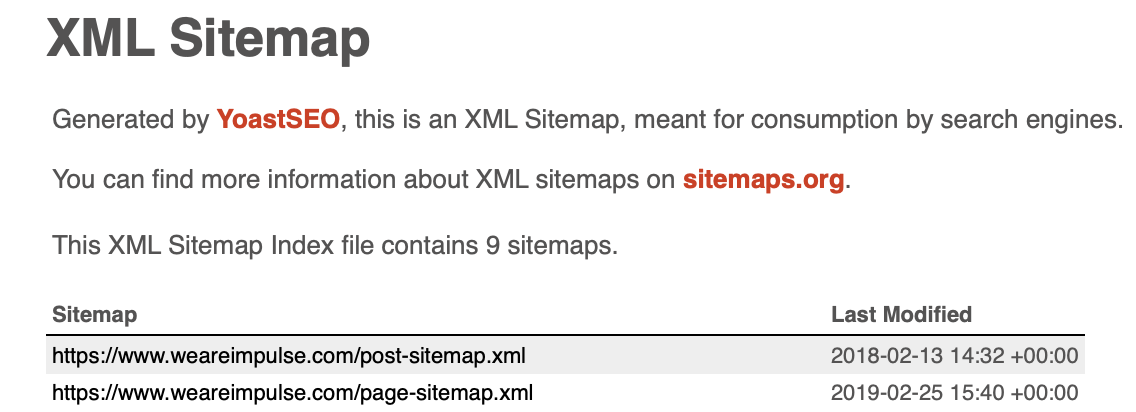

You can often see the main sitemap file by typing in the URL domain/sitemap_index.xml. Here you will find an index of all the sitemaps you have for the domain. In the example shown, each of the secondary sitemaps links to a list of URLs of that type (one links to a list of posts and the other links to the list of pages).
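If you prefer to read a sitemap with a script rather than in the browser, the sketch below shows one way to do it with Python's standard XML parser. The sitemap index content and the example.com URLs are hypothetical; the namespace is the one defined by the sitemaps.org protocol. The same function works on a sitemap index (which lists other sitemaps) and on a regular sitemap (which lists pages), because both store their addresses in `<loc>` elements.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap index, used only for illustration.
SITEMAP_INDEX = """\
<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap><loc>https://example.com/post-sitemap.xml</loc></sitemap>
  <sitemap><loc>https://example.com/page-sitemap.xml</loc></sitemap>
</sitemapindex>"""


def list_locations(xml_text):
    """Return every <loc> value found in a sitemap or sitemap index."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall(".//sm:loc", SITEMAP_NS)
        if loc.text
    ]


for location in list_locations(SITEMAP_INDEX):
    print(location)
```

Comparing this list against the URLs you actually want indexed makes it easy to spot relevant pages that are missing from the sitemap, or irrelevant ones that should be left off.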

Block 2 of analysis. Content/Keywords/CTR

2.1 Content

Content is a key factor with regard to SEO, so it should be thoroughly analysed to ensure you are maximising your SEO efforts.

Some of the most important aspects of SEO relate to the quality of the content, the value each page provides, how long content keeps people on the page, whether or not the page includes duplicated text from other pages, and URL cannibalisation. In addition, it is also important to consider keywords, internal links, 404 errors, broken links, redirects, and URL & image optimisation.

Value

The value that the content brings to a website's users is the level of satisfaction that a user obtains when consuming your content, especially the extent to which it meets the specific needs of their online search.

When the content is perceived as valuable, it usually has a positive impact on the website's SEO, such as keeping that user on your site, increasing the likelihood the content is shared, and having more post engagement.

The value of content is, to a certain extent, quite subjective, because there is no tool that directly measures the value of a URL. However, you can measure factors such as time spent on the page, bounce rate, links, shares, positive comments, etc., to determine how valuable a piece of content is.

Retention and loyalty

The degree of interest and satisfaction that your content provides to users will determine how long they will stay on your page. The more value you bring to site users, the greater the chance that they will stay on your page and even come back on another occasion, generating more recurring visits in the future and decreasing the bounce rate too.

Google Analytics is a great way to analyse this sort of information, as it gives you data on how users interact with your content (time spent, bounce rate, etc.).

Duplicate Content

Ensuring content is not duplicated from other internal website pages or from external pages from across the web is an important SEO factor. Google considers duplicate content undesirable, so it prefers not to crawl website URLs that have copied other pages' content, meaning that content won't be shown in the search result pages.

Duplicate content may sometimes be accidental, but it may also be due to:

- Pages that have grouped URLs into areas of similar themes (category pages, tags, etc.)

- Automatic paginations in a list of elements that do not fit on a single page

- URLs with parameters of any kind that are the same as the original URL and are not redirected

- Not redirecting users from different versions of the domain to a single page. For example, if your page has both http and https versions, then you should redirect the users to the secure version of the page.

Solving duplicate content:

- Generate different content on all your pages and target different keywords

- Unindex the duplicate URLs if they don't need to be indexed on Google, since they will be targeting the same keyword (leading to URL cannibalisation)

- Use canonical URLs to tell the search engine which is the main URL that should be indexed and take priority among a set of similar URLs

- Use rel="next" and rel="prev" link attributes on paginated URLs

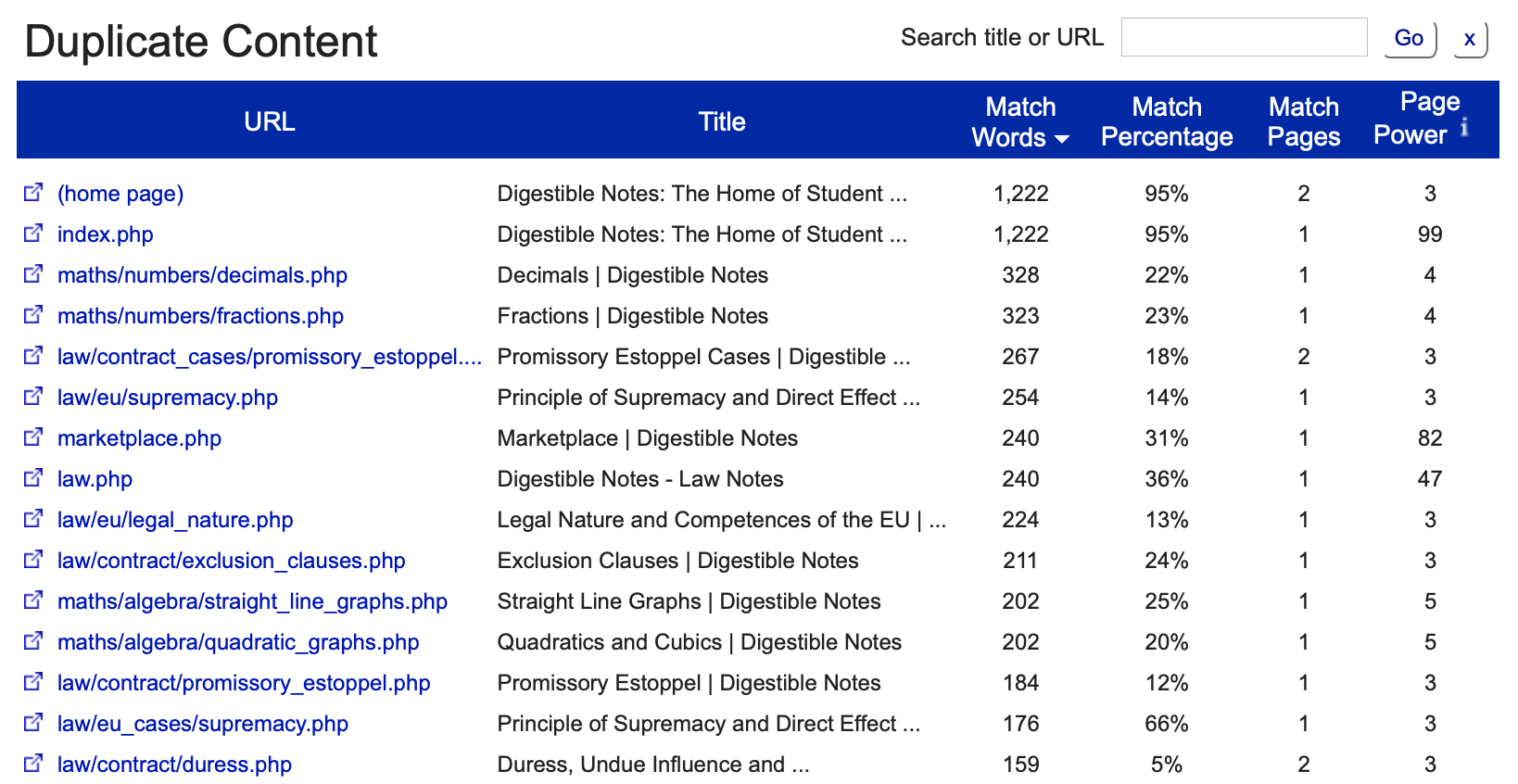

With the free Siteliner tool you can easily analyse whether or not there is duplicate content on your website.
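Two of the causes listed earlier (URLs with parameters, and http/https versions of the same page) can be spotted from a plain list of URLs. The sketch below normalises each URL and groups the ones that collapse to the same address. It is only an illustration under my own assumptions: the `IGNORED_PARAMS` set, the decision to treat www and non-www hosts as the same site, and the example.com URLs are all hypothetical and should be adapted to your website.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these parameters never change the page content on this site.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref"}


def normalise(url):
    """Reduce a URL to a canonical-looking form for comparison."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):  # Assumption: www and non-www are one site.
        host = host[4:]
    query = [(k, v) for k, v in parse_qsl(parts.query) if k not in IGNORED_PARAMS]
    path = parts.path.rstrip("/") or "/"
    # Always compare on https, since both protocols should serve one page.
    return urlunsplit(("https", host, path, urlencode(sorted(query)), ""))


def group_duplicates(urls):
    """Return only the groups where several URLs normalise to the same one."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalise(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}


crawled = [
    "http://example.com/shoes",
    "https://www.example.com/shoes/?utm_source=news",
    "https://example.com/boots",
]
print(group_duplicates(crawled))
```

Each group it returns is a candidate for a redirect or a canonical URL, as described in the list of solutions above.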

Cannibalisation

It's really important that there is no more than one URL focused on a particular search topic on your website. That is, each keyword you target must be targeted on a separate page so Google knows which URL it should serve for each search intention, improving your ranking.

Furthermore, if you create one large page that can build credibility, shares, and traffic, instead of dispersing your content across several pages, you will find it a lot easier to achieve your positioning goals.

In my article on Google Search console, I explain how you can detect if there is any cannibalisation on your site.

Content Optimisation (tags, density, etc.)

This section is about ensuring the structure of your website is properly optimised.

One of the key aspects of this is ensuring that you are using heading tags (<h1> to <h6>) and that they are used in a logical order. For example, you should use h1 tags for the main title, h2 tags for subtitles, and h3 tags for headings within those sections.

Within your header tags it is advised that you place keywords and other relevant words in them, as this helps Google to understand what your page is all about.
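As a rough illustration of what "logical order" can mean in practice, the sketch below reads the heading tags of a page and reports any place where a level is skipped (for example, an h1 followed directly by an h3). The `heading_issues` helper is my own naming and the rule it applies is just one reasonable interpretation, not an official Google requirement.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Records the level (1-6) of every heading tag, in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html):
    """Return a message for every heading that skips a level."""
    collector = HeadingCollector()
    collector.feed(html)
    issues = []
    for previous, current in zip(collector.levels, collector.levels[1:]):
        if current > previous + 1:
            issues.append(f"<h{previous}> is followed by <h{current}>, skipping a level")
    return issues


print(heading_issues("<h1>SEO Audit</h1><h3>Robots</h3>"))
```

Moving back up the hierarchy (an h3 followed by an h2) is not reported, since that simply starts a new section.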

The <title> tag is also very important as Google deems it highly relevant contextual information about what is on your page. It is also used by Google to display your website page information on the search engine results page (SERP).

In addition, analyse the density of the keywords on the page. This means you should look at the number of times a key expression is written in comparison to the total word count of the page content. Your keyword density shouldn't be too high though otherwise the search engine may penalise you for something known as 'keyword stuffing'.
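Keyword density as described here is simple arithmetic: occurrences of the key expression divided by the total word count. The sketch below is a minimal version of that calculation. The tokenisation (lowercase letters, digits and apostrophes only) is my own simplification and will not handle accented characters well.

```python
import re

WORD_PATTERN = r"[a-z0-9']+"


def keyword_density(text, phrase):
    """Occurrences of `phrase` per 100 words of `text`."""
    words = re.findall(WORD_PATTERN, text.lower())
    target = re.findall(WORD_PATTERN, phrase.lower())
    if not words or not target:
        return 0.0
    size = len(target)
    # Slide a window over the text and count exact matches of the phrase.
    hits = sum(
        1 for i in range(len(words) - size + 1) if words[i:i + size] == target
    )
    return 100 * hits / len(words)


sample = "SEO audit tips: an SEO audit finds issues"
print(keyword_density(sample, "SEO audit"))
```

The sample text has 8 words and the expression appears twice, so the function returns 25.0. That would be far too high on a real page, but it shows how the figure is obtained.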

You can also add rich content tags or Rich Snippets from Schema.org, which optimise the appearance of your website on the SERP, increasing your click through rate (CTR). This is mentioned in more detail later in the post.

Finally, remember that Google can only read the content of your page that has been written in text format. Images, videos, audio, and other multimedia elements cannot be read by Google. This is not to say that you shouldn't be using them, but try to keep as much of your information as readable as possible for Google's purposes.

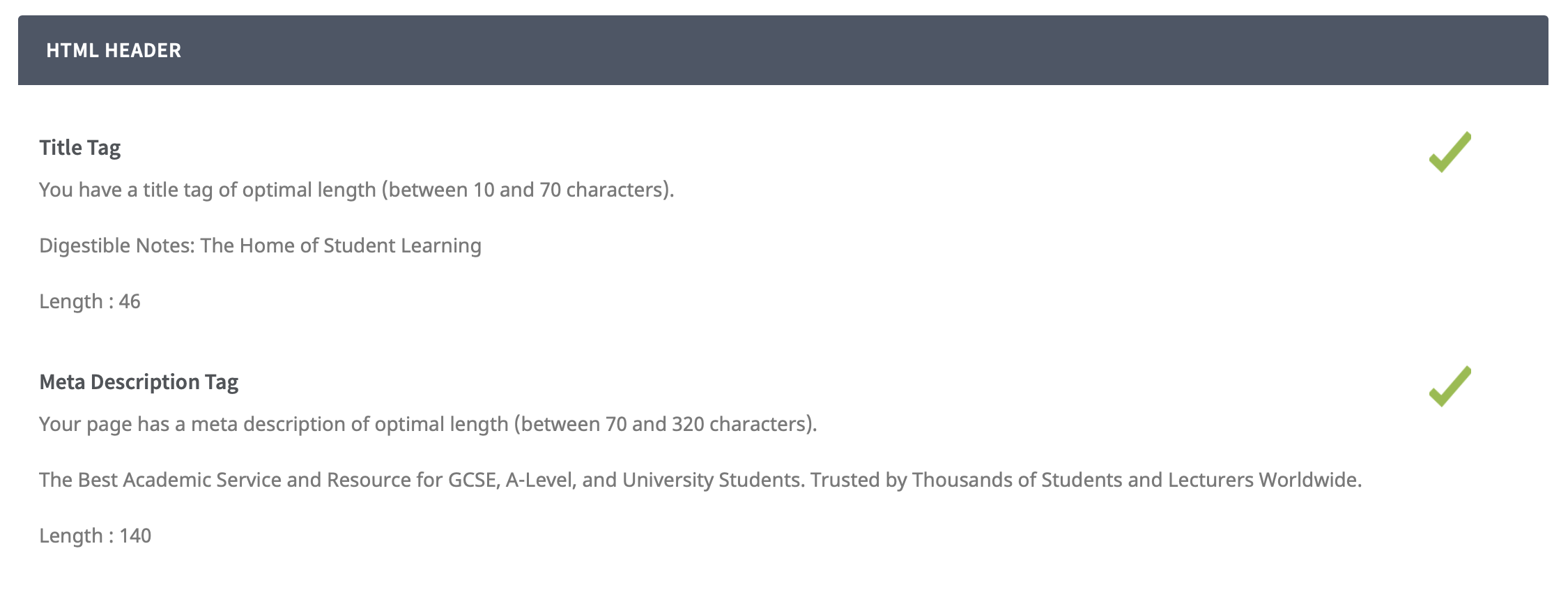

A free and very simple tool to use to analyse these types of tags and other important elements is SEOptimer.

Architecture

Web architecture is another important factor. The way in which a website structures its sections, groups URLs, and links URLs are all crucial SEO points.

Site architecture can affect SEO on two levels. On the one hand, it influences how users understand the navigation of the website. On the other hand, the search engine finds it easy to crawl and understand a website with a logical structure.

Web architecture is not just about structure; it is also important on a semantic level. That is to say, the keywords that are targeted on specific pages are an important thing to consider. If you are targeting keywords randomly, without prior thought, it is likely you could make some SEO improvements.

If you have particularly important pages on your website then they should be easy to find, have a number of internal links linking to them, and also not be too deep inside your website with respect to your home page (i.e. it should take the least number of clicks possible to get to them, ideally one or two).
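The "number of clicks from the home page" idea can be measured with a breadth-first search over your internal links. The sketch below assumes you already have a map of which page links to which (for example, exported from a crawler); the `LINKS` data is hypothetical. As a bonus, any page that never gets a depth is unreachable from the home page, which is how orphan URLs show up.

```python
from collections import deque

# Hypothetical internal link graph: page -> pages it links to.
LINKS = {
    "/": ["/blog", "/services"],
    "/blog": ["/blog/seo-audit"],
    "/services": [],
    "/blog/seo-audit": ["/blog/seo-audit/checklist"],
    "/blog/seo-audit/checklist": [],
    "/old-landing-page": [],
}


def click_depths(links, home="/"):
    """Breadth-first search: fewest clicks needed to reach each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


depths = click_depths(LINKS)
orphans = sorted(set(LINKS) - set(depths))

for page, depth in sorted(depths.items(), key=lambda item: item[1]):
    print(depth, page)
print("Unreachable from home:", orphans)
```

In this example the checklist page sits three clicks deep, so if it were an important page it would be a good candidate for a link from the home page or the blog index.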

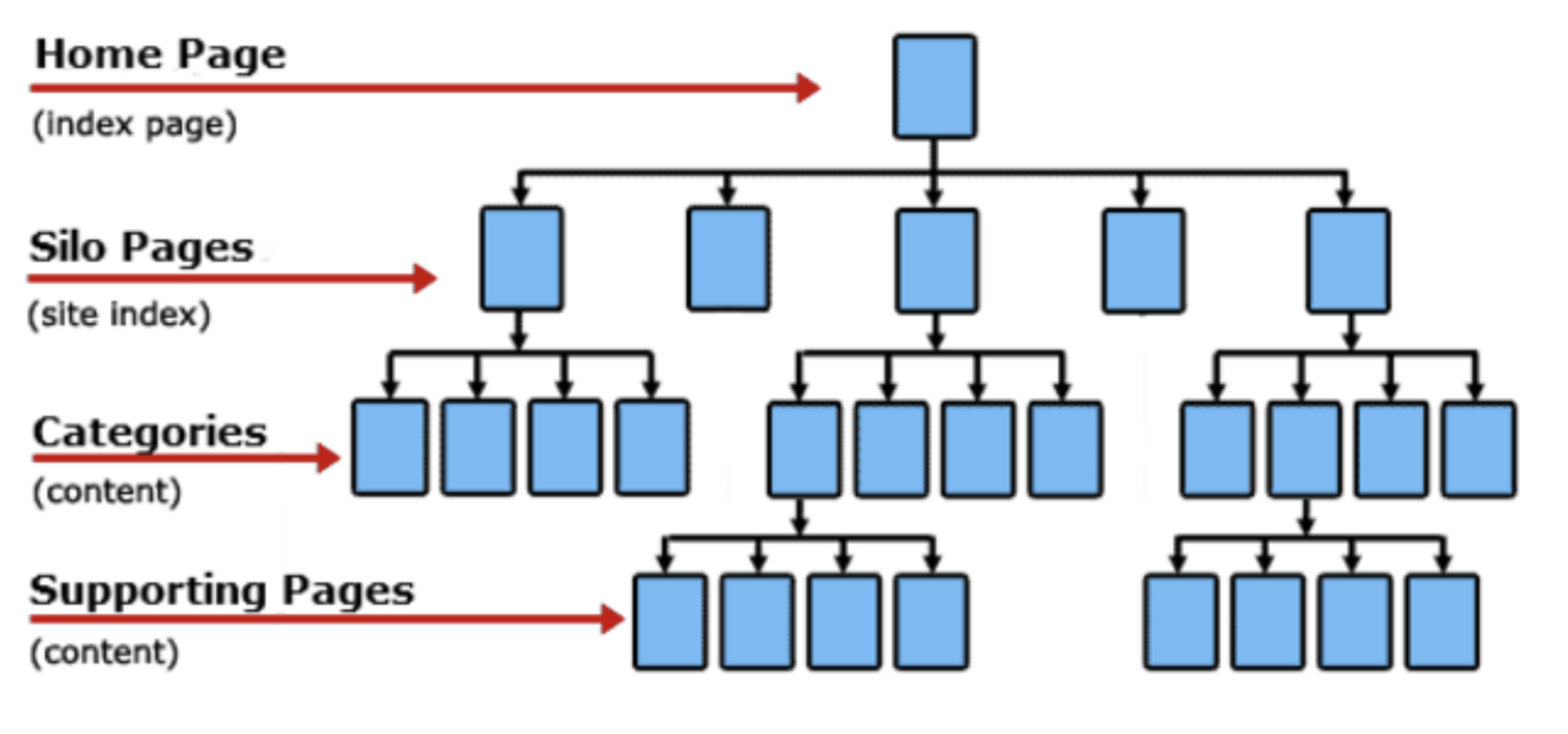

Below you can see an image which shows a website's horizontal architecture with just a few layers of navigation, making it simple and structured to access any page on the site:

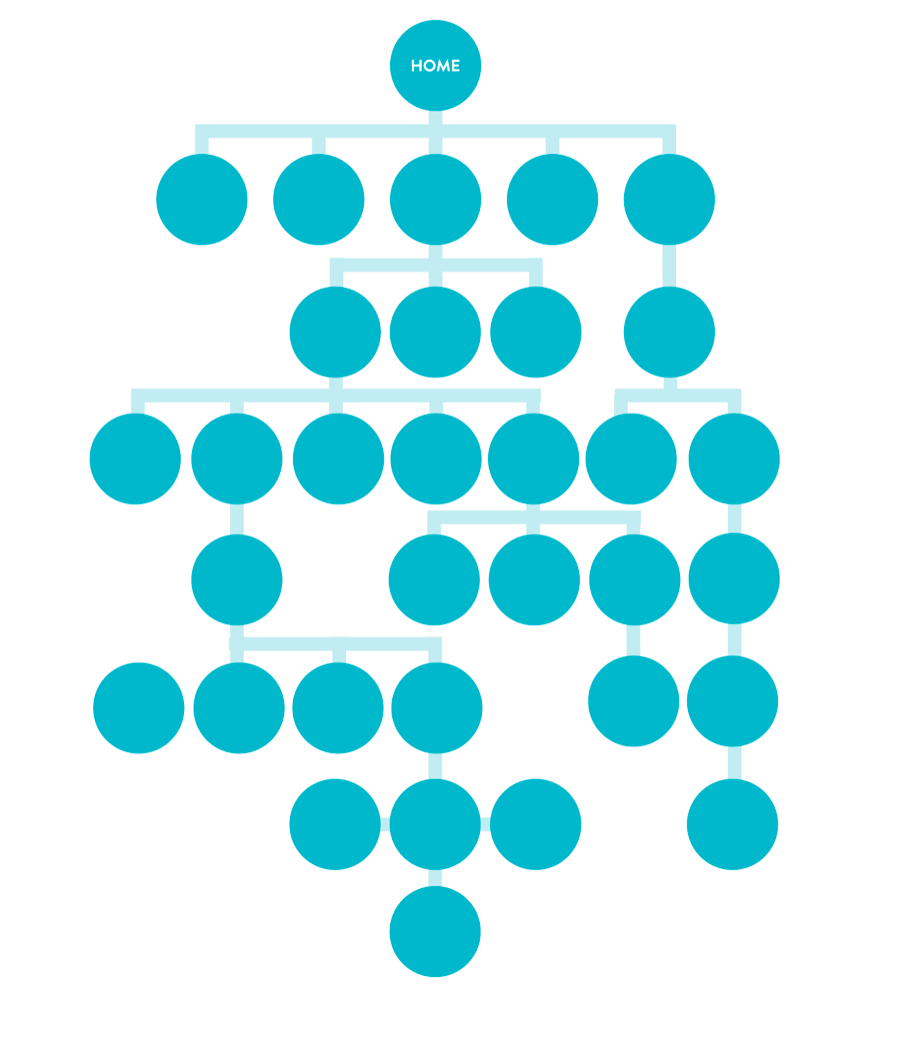

If your website architecture is too vertical, as seen below, you can see how this can lead to confusion when a user or a search engine tries to use your website:

If you believe your website is looking a bit vertical, then you may want to consider restructuring the website to make it more friendly. If you need any help with this just leave a comment below and I will get back to you!

You can easily analyse the web architecture of a website and its directory structuring with a tool like Screaming Frog.

Internal Links

Internal linking is another important factor for SEO and should be analysed as part of your SEO Audit. If you have particular pages on your website that you want to be seen as more relevant by search engines, then you should be providing that page with many links.

As seen above, internal linking is also part of the web architecture, and so it helps to organise the structure of your website and facilitates the ability of search engine bots to crawl your website. Furthermore, a good internal linking structure encourages people to look at more pages on your website, thus reducing the bounce rate.

To analyse your internal link structure try using the Google Search Console.

404 Pages

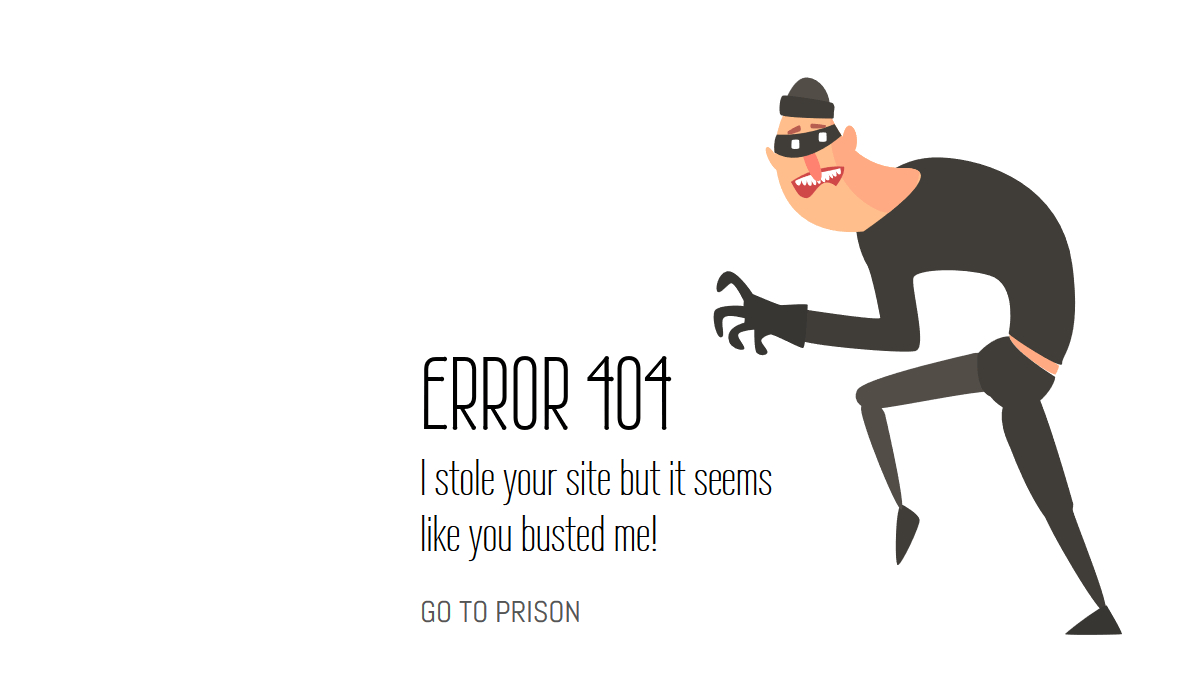

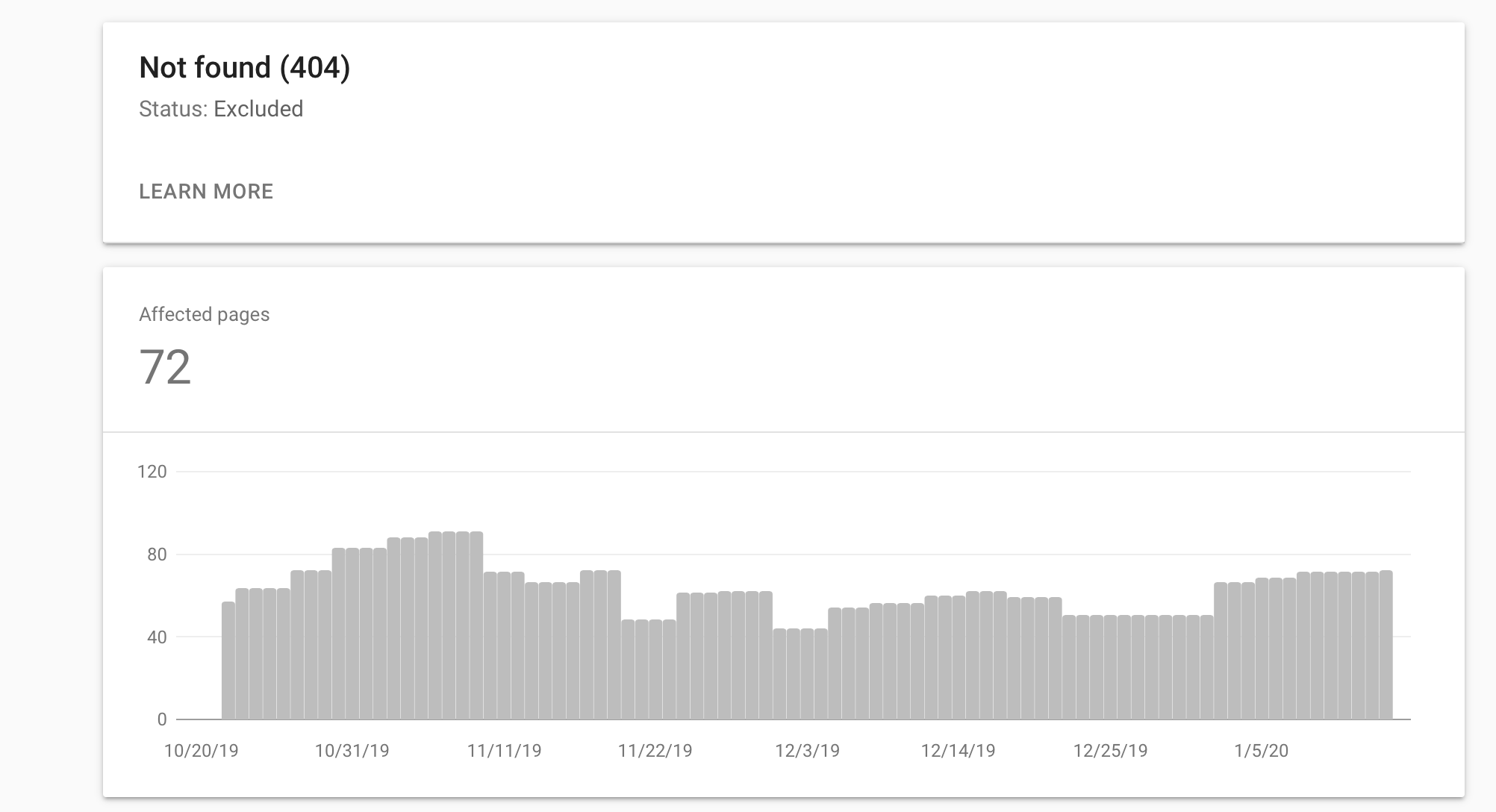

In your SEO Audit, analyse the 404 errors that are presented on your website. Contrary to popular belief, 404 errors aren't actually penalised by search engines and aren't as problematic as you may, at first, think.

In short, a 404 error is a URL on the site that no longer exists, a URL that has been incorrectly written, or a URL that has been poorly linked from another website, meaning instead of seeing content you see a 404 error page. You can also customise this page to your liking.

The main problem with 404 errors is that if a user is trying to access a specific piece of content and a 404 error message is shown, the user will become frustrated and, as a consequence, leave the site. Therefore you will lose a sale, a share, or a recommendation. In short, if the URL throws a 404 error, there will be no interaction from the user and no conversion.

Another problem with 404 errors is that if you have too many of them, the search engine is forced to use up its crawl budget unnecessarily. That is to say, part of your website may not be crawled and ranked, as the crawling bots waste time trying to crawl URLs that don't exist.

Fixing 404 errors:

- Simply make a 301 redirect from the URL throwing the error to the URL you want the user to go to. If you're using a content manager like WordPress, you could use the free Simple 301 Redirects plugin.

Broken Links

Broken outgoing links (links from your site that point to another website that do not work because the URL is broken or no longer exists) are bad for the user (since they cannot access the content they want) and for Google (since it conveys the idea that your website is poorly optimised and provides low quality content).

Again, if you have many broken links, you can use up the crawl budget, meaning less of your website will be visible on Google.

However, linking to relevant sites and pages with similar topics is a positive when it comes to SEO, as it provides value to users, meaning they are more likely to come back to your page again.

To detect broken links on your website you can use a free tool called Broken Link Check.

Redirects

The most common redirects are 301 redirects (permanent) and 302 redirects (temporary). A redirect serves to send user traffic from an old URL that no longer exists to a new one. The redirection, if permanent, also transfers authority from the old URL to the new one.

In the SEO Audit you should analyse the redirects that have been implemented at the domain level (complete redirects from an old domain to a new one) and of specific URLs.

Redirects can slow down the load time of a web page, because you are forcing the browser to request two separate URLs. In turn, a slower page load time can impact SEO negatively.
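The slowdown gets worse when redirects are chained (A redirects to B, which redirects to C), because every hop is another request. If you can export your redirect rules as a simple old-to-new mapping, a short script can find chains and loops. The sketch below works on a hypothetical `REDIRECTS` dictionary and does not make any network requests.

```python
# Hypothetical redirect rules (old URL -> new URL), for illustration only.
REDIRECTS = {
    "/old-page": "/newer-page",
    "/newer-page": "/final-page",
    "/loop-a": "/loop-b",
    "/loop-b": "/loop-a",
}


def resolve(url, redirects):
    """Follow the rules from `url`.

    Returns (final_url, hops). final_url is None when the rules loop.
    """
    seen = [url]
    while seen[-1] in redirects:
        next_url = redirects[seen[-1]]
        if next_url in seen:
            return None, len(seen)
        seen.append(next_url)
    return seen[-1], len(seen) - 1


for source in REDIRECTS:
    final_url, hops = resolve(source, REDIRECTS)
    if final_url is None:
        print(source, "is part of a redirect loop")
    elif hops > 1:
        print(source, "takes", hops, "hops; point it straight at", final_url)
```

Any URL reported with more than one hop can usually be updated to redirect directly to its final destination.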

URLs

Each URL on each page of your website has something known as a slug. The slug is the part of the URL that follows the domain. For example in the URL example.com/slug, the slug is the part next to the domain.

It is recommended that the slug contains a keyword or key expression (with words separated by hyphens) that you want your page to rank for. Try to keep the slug short and avoid using strange characters, numbers, and unnecessary words (e.g. conjunctions, articles, prepositions, although some people argue that keeping prepositions, for example, is important because otherwise you can change the meaning of the search).
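Here is a small sketch of how a slug following these recommendations could be generated from a page title. The `STOP_WORDS` list is a short, hypothetical English list of my own, and dropping those words is optional precisely because of the disagreement mentioned above. Note that this version keeps digits, so remove them by hand if you prefer to avoid numbers.

```python
import re
import unicodedata

# Assumption: a small English stop-word list; adjust or empty it as needed.
STOP_WORDS = {"a", "an", "the", "and", "or", "of", "to", "in", "for", "on"}


def slugify(title, drop_stop_words=True):
    """Turn a title into a lowercase, hyphen-separated slug."""
    # Strip accents so that, for example, "é" becomes "e".
    ascii_text = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    words = re.findall(r"[a-z0-9]+", ascii_text.lower())
    if drop_stop_words:
        kept = [word for word in words if word not in STOP_WORDS]
        words = kept or words  # Never return an empty slug by accident.
    return "-".join(words)


print(slugify("How to Do an SEO Audit: Step-by-Step!"))
print(slugify("How to Do an SEO Audit: Step-by-Step!", drop_stop_words=False))
```

The first call prints how-do-seo-audit-step-by-step and the second prints how-to-do-an-seo-audit-step-by-step, which shows how removing small words can shorten a slug but also change how it reads.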

Images

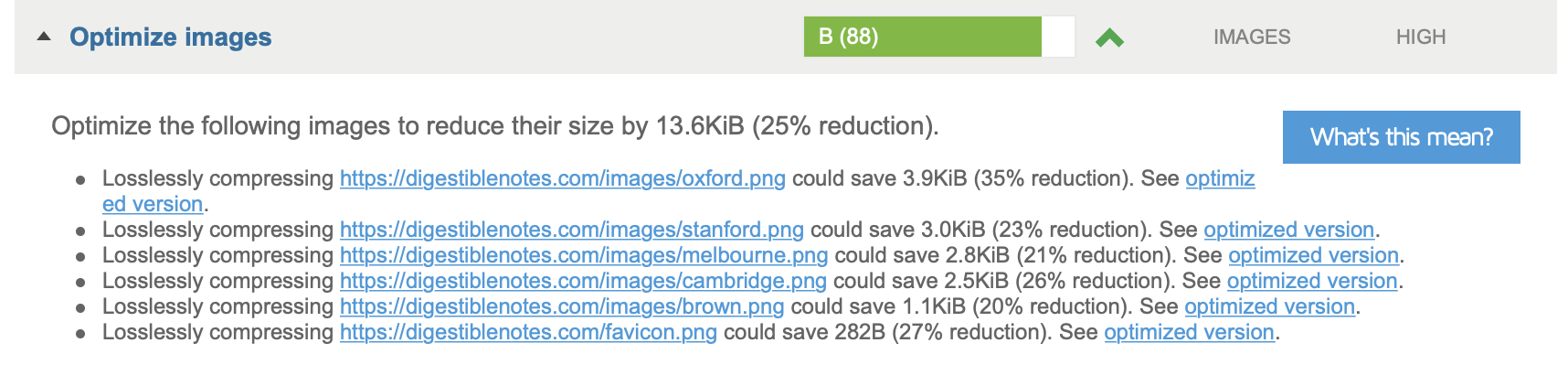

The first thing you should consider is the resolution of the images. A medium-low resolution is probably sufficient. If you are using high-quality images then this will use up server space and will take longer to load. Also, the size of the images is an important consideration. For example, if an image on your website is going to be in a container 300 pixels wide and 300 pixels high, then upload an image of that exact size.

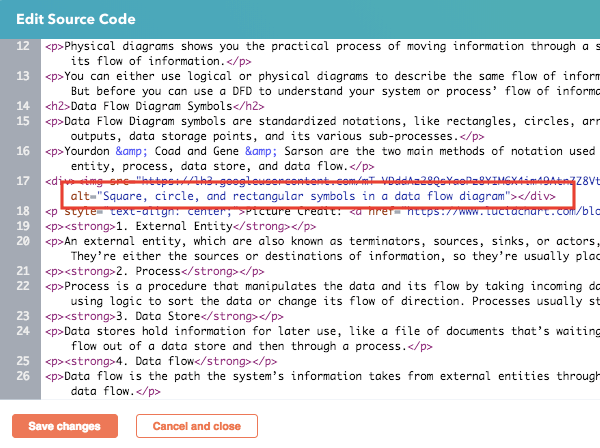

As Google cannot specifically understand what is contained in an image, it is important that you fill in the associated text elements of an image. The most important of these text attributes is the alternative text (alt), as this allows Google to understand what the image is showing.
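Missing alt text is one of the easiest problems to detect with a script. The sketch below lists every image on a page whose alt attribute is absent or empty; the `images_missing_alt` helper and the sample HTML are my own illustration. Keep in mind that an empty alt can be a deliberate choice for purely decorative images, so treat the output as a list to review rather than a list of errors.

```python
from html.parser import HTMLParser


class ImageAltAuditor(HTMLParser):
    """Collects the src of every <img> with a missing or empty alt."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        if not (attr_map.get("alt") or "").strip():
            self.missing_alt.append(attr_map.get("src") or "(no src)")


def images_missing_alt(html):
    auditor = ImageAltAuditor()
    auditor.feed(html)
    return auditor.missing_alt


# Hypothetical markup used only for illustration.
sample_html = (
    '<img src="a.png" alt="SEO audit checklist">'
    '<img src="b.png">'
    '<img src="c.png" alt="">'
)
print(images_missing_alt(sample_html))
```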

Another aspect that helps with SEO is the name you use for the image file. If the filename includes the keyword you wish to rank for, the search engines are going to have a better understanding of your page, which can help it rank for that keyword.

Finally, the context of an image can also help. That is, the text around an image lets the search engines understand the thematic relevance of the image.

To see if your images are optimised properly you can use GTMetrix, which provides performance data on a number of different page elements.

2.2 Keywords

Focusing SEO actions around the right keywords is crucial. If you are trying to rank for the wrong keywords that are irrelevant to your business, too difficult to rank for, or have negligible monthly searches, you will waste a lot of time.

Therefore, it is absolutely essential that before deciding on the content you want to produce and organising the structure of your website, you should undertake thorough research into the keywords you wish to target.

From this, you can develop your content strategy and organise the website, so the main section and articles are based around the keywords you have chosen.

A website that has done no keyword research will likely rank quite low in search engines and, even if they do rank high, it will probably be for an irrelevant search term. This is a poor strategy and will lead to few positive results/conversions.

Optimising keywords for SEO purposes:

- Write naturally and don't force keywords into your writing

- Place the keywords in relevant places across the page (e.g. in the titles, headings, alt tags, first paragraphs, etc.)

- Write at least 300 words on every page. However, your goal is to provide genuinely useful information to your readers, so if that means you write less then that should be okay.

- Write synonyms to your keywords in the content, because although you are using different words to the keywords, it will help capture wider searches from users who are looking for your content but haven't used your exact keywords.

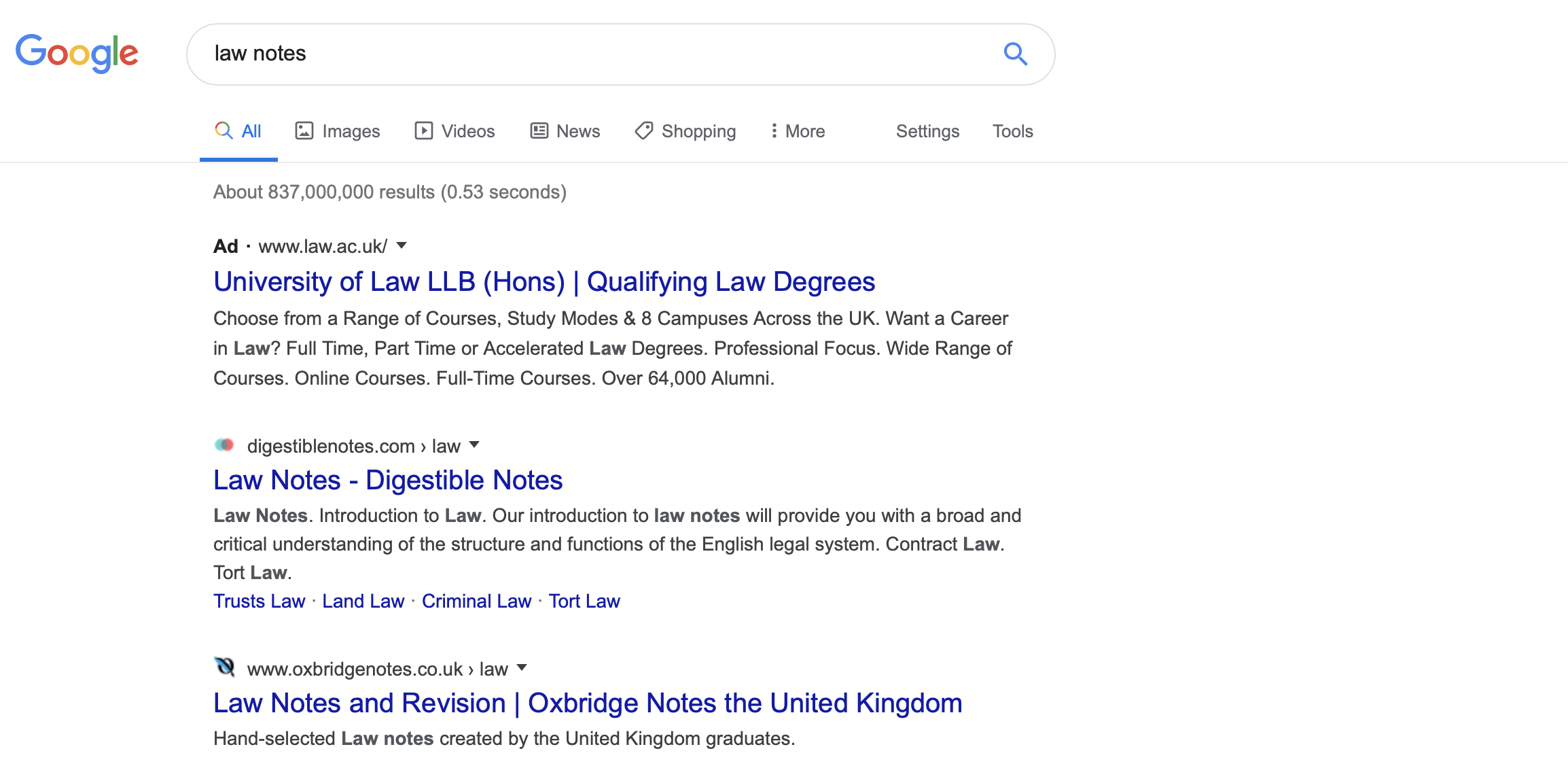

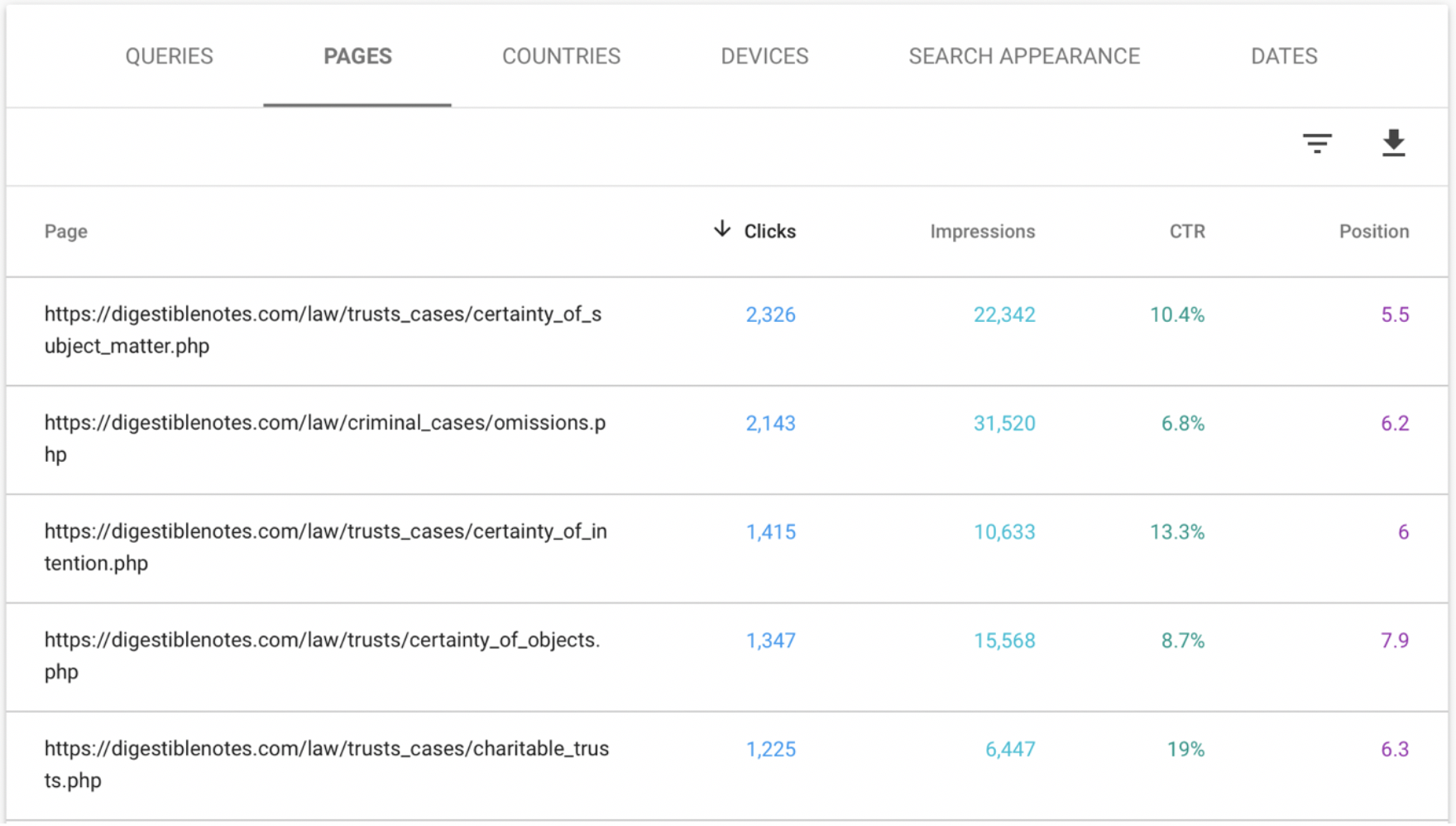

2.3 CTR

The Click Through Rate (CTR) is the number of times your site was clicked divided by the number of impressions it received on the result pages (SERP), usually expressed as a percentage. If you detect that your site has a low CTR, you should consider whether or not the way you present yourself is engaging or interesting enough. Remember, the information shown on the result pages is the information included within the meta description and title tags, so think about changing these if the clicks to your website are a little low.
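As a quick worked example of the calculation, the sketch below computes the CTR for a couple of pages and flags the ones below a chosen threshold. The figures in `PAGES` and the 1% threshold are hypothetical; what counts as a "low" CTR depends heavily on the position and type of search.

```python
def click_through_rate(clicks, impressions):
    """CTR as a percentage: clicks divided by impressions, times 100."""
    if impressions == 0:
        return 0.0
    return 100 * clicks / impressions


# Hypothetical figures, for illustration only.
PAGES = [
    {"url": "/blog/seo-audit", "clicks": 120, "impressions": 2400},
    {"url": "/services", "clicks": 8, "impressions": 1600},
]


def low_ctr_pages(pages, threshold=1.0):
    """Return the URLs whose CTR is below `threshold` percent."""
    return [
        page["url"]
        for page in pages
        if click_through_rate(page["clicks"], page["impressions"]) < threshold
    ]


for page in PAGES:
    print(page["url"], click_through_rate(page["clicks"], page["impressions"]))
print("Review titles and descriptions for:", low_ctr_pages(PAGES))
```

Here the blog post has a CTR of 5.0% (120 clicks out of 2,400 impressions) while the services page only reaches 0.5%, so the services page is the one whose title and meta description deserve a second look.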

How to improve the CTR on the SERP:

- Use attractive titles with valuable words and calls to action

- Write attractive meta descriptions that accurately explain the content on the page

- Use prominent and attractive visual elements in your titles and meta description to get the user's attention, such as emojis, special characters, etc.

- Use rich elements (rich snippets) whenever you can, to provide more specific and specialised content to the user and to stand out visually (e.g. reviews, star ratings, etc.)

- Remove the date of your content if it is timeless, to prevent users from thinking your content is obsolete

- Improve your copywriting technique

- Look at the results pages to see what your competitors are doing and get inspiration from that (but do not copy them!)

- Offer content that is valuable to the user

With the Search Console tool you can easily analyse your CTR for all the URLs on your website. In our post about the new version of Search Console you have a step-by-step tutorial on how to monitor and optimise your CTR through the performance functionality.

Block 3 of analysis. Inbound links / Domain Authority

3.1 Inbound Links

Incoming links or backlinks (links that are implemented on other websites linking back to your website) are unbelievably important when it comes to SEO.

Since Google was born, they have considered that backlinks are most important/relevant when they come from domains that are high quality. Therefore, even if you get lots of backlinks you want to analyse where exactly these backlinks are coming from and ensure these sites have a certain authority and the links are naturally implemented into their site. A healthy link profile with references from many relevant sites is ideal.

Sometimes you can get a number of undesirable inbound links for reasons that are completely random or due to some external attack on your website. So even if you haven't actively sought to build this backlink profile, it is still worth spending some time checking that any links coming to your site are from authoritative domains that talk about similar content to you. Again, this can be analysed using the Google Search Console.

3.2 Domain Authority

The authority, relevance or popularity of a domain can be analysed in a number of ways and using a few different tools. In general, a domain with authority is one that has good links, signs of good SEO, and has useful content.

To assess the relevance of a domain, Google uses something called PageRank. It's impossible to know the exact criteria for determining what they deem to be most important ranking factors, but the following tools do offer some assistance...

You can measure your domain with the Moz Domain Authority, which is great for determining the health and quality of a domain. You can also use paid tools like Ahrefs, which is pretty reliable at estimating a domain's quality/authority.

However, you should keep in mind that these tools are all provided external to Google, and are simply best estimates on a set number of criteria. Do not take these results as something absolute, but rather as an approximate indication of the current methods of determining domain authority and quality.

Block 4 of analysis. Performance / Adaptability / Usability

4.1 WPO Performance

In your SEO Audit, you should also analyse the performance of the website. That is, we need to make sure the pages on your website load quickly. This is an important factor because users, today, are only happy with using sites that provide them with results quickly.

There is no specific loading time that Google defines as fast or slow, therefore your goal should be to try and load your webpages as fast as possible. To make your page load faster isn't always straightforward, and there are numerous loading factors that can influence it.

1.) Hosting

The server where you host your website is one of the main factors that determines how fast or slow your site loads. I recommend you use a host for your website that has a good reputation for good performance and security, as well as great support in case you run into any problems.

Changing from a bad host to a good one is one of the quickest and easiest actions you can take to improve your site's loading speed and is one I always check with clients before undertaking any other SEO optimisation methods.

2.) Template and plugins

If you are using a content manager, WordPress or something similar, it is important to consider what template/theme you are using, and any other plugins you have added to the site. A slow template will make it difficult to optimise speed and particular plugins will slow your site down too.

3.) Images

Another simple thing to resolve has to do with the images on your website. Sometimes a website is slow because the images have not been optimised properly (as we discussed earlier). Make sure you don't upload images that are too large or that have a resolution higher than necessary. You can use a number of tools to ensure your pictures are cropped to the exact dimensions you need.

4.) Cache

Installing a cache system is one of the most recommended methods to improve site performance. For this, there are plugins such as WP Rocket (paid) and Fastest Cache (free), which do wonders when it comes to site speed. In essence, this enables static elements to be served more quickly because they are already preloaded.

5.) Use a CDN

A Content Delivery Network (CDN) improves the delivery of data from your website to the user thanks to the fact that it uses data centres distributed across the globe and offers a number of improvements to page loading speed.

6.) Minimise the code

Reducing the amount of code your website has by cleaning unnecessary code or reducing the spacing can help your page to load faster too.

7.) Optimise the database

If your website is pulling information/data from a database, this can take a long time if it is not optimised properly. Try cleaning the database up every now and again to ensure it can work as efficiently as possible.

8.) Unnecessary extras

Avoid implementing too many different things on your website, especially if they aren't that important. Keep in mind that every time you add a social feed, more video content, or a new plugin, your site can slow down.

4.2 Responsiveness

Google analyses whether or not websites are responsive, which affects how they are ranked on the search results pages. In an SEO Audit you want to analyse if the website adapts correctly to the screen resolution and the device used (e.g. desktop, tablet, smartphone, etc.). Making sure your website is responsive is not only good to rank well on Google, but is also important for the user too.

Remember, a website that is responsive will ensure that users that come to your site are happy and enjoy your content regardless of the device they use. This will lower the bounce rate, increase CTR, and improve the time they stay on your page, which all influence your SEO score positively. If, on the other hand, users come to your site and have a negative experience due to content not being displayed properly on their device, they won't interact and definitely won't convert.

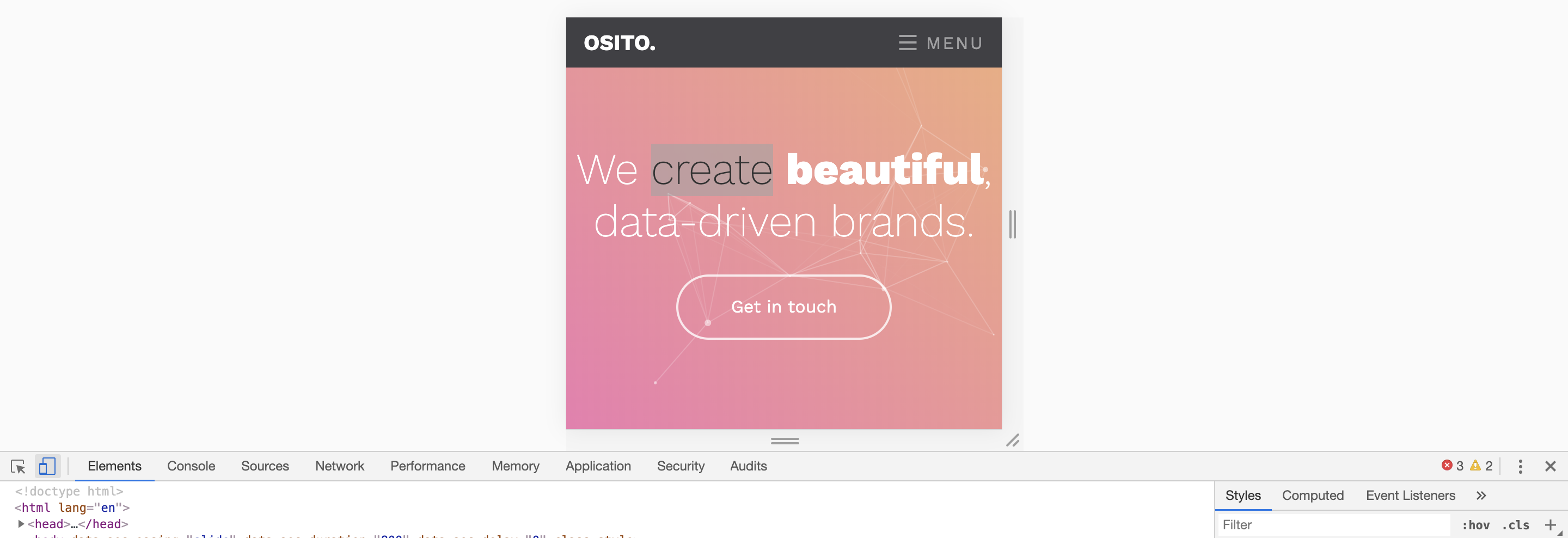

Analysing if a website is responsive

You can do two things to check if your website is responsive. The first, is to check what your website looks like on different devices that you have (e.g. your phone, laptop, and tablet) and see how your content behaves on each. The other method is to use a desktop application that simulates various screen sizes, brands and models of smartphones, tablets, laptops, etc.

The best way to do this is to use the inspect command on Google Chrome, which lets you preview how your website would look on different devices. To do this, right click on any area of your website, then click inspect, and click on the mobile device icon in the top left of the panel that opens.

4.3 Usability and User Experience

What is usability?

Usability is how easy it is for a user to understand and use a website. In other words, a usable website is one in which the user knows how to use the functionalities without serious problems and can easily find their way around the website.

👍 A usable website doesn't require too much explaining

👍 A usable website has text that is easily readable (good font and colour)

👍 A usable website doesn't have popups or other invasive elements

👍 A usable website can be browsed across all browsers and screen sizes

👎 An unusable website is not easily understood or easily accessible by the user

👎 An unusable website works slowly and is difficult to navigate

👎 An unusable website displays elements that interrupt browsing or that annoy the user

👎 An unusable website has text that is difficult to read and has little contrasting colours

👎 An unusable website isn't responsive and won't work properly on certain browsers and screen resolutions.

What is user experience?

User experience refers to the degree of satisfaction or dissatisfaction that the previous usability factors have generated in the user, prompting them to stay on the site, interact, perform positive actions for SEO and generate conversions.

Consequently, a positive user experience is not only beneficial for your brand and conversion rate, but also positively impacts SEO, as a satisfied user who understands the value of your site, generally engages in SEO friendly activities:

- They will stay on the site for longer

- Lower bounce rate

- Higher CTR

- More web page visits

- More shares and recommendations

- More form submissions

- More link clicks

- More conversions (leads, sales, etc.)

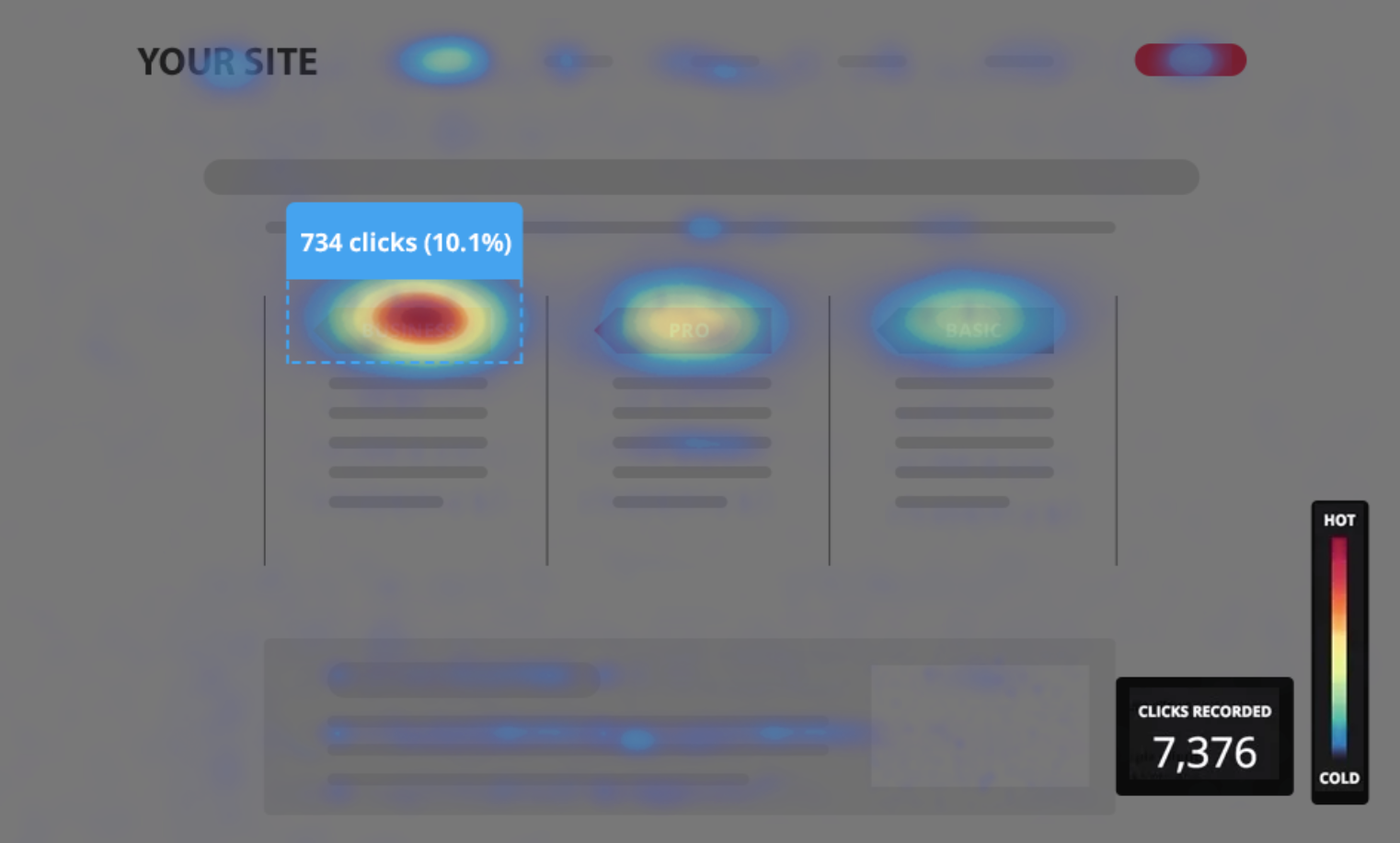

You can monitor the behaviour of users on your website with a tool like Hotjar, which allows you to see what parts of your site are clicked most frequently and other particular behaviour patterns.

Block 5 of analysis. Code and Labels

5.1 Code and Tags

In this section we analyse SEO from a technical point of view. In essence, we need to look at the code that Google reads when determining how it should rank your page, e.g. the HTML, JavaScript, and CSS.

Firstly, we will look at the most widely used language on websites: HTML. The HTML code defines the structure of a webpage, and also uses meta tags, as well as specific attributes within tags, to send information to search engines and browsers.

What are the HTML tags and attributes that impact SEO?

Title

<title> </title>

This is probably the most relevant tag and must include the keyword you are targeting for that page. It is also the element that is shown as the title of each search result and placed inside each browser tab. Usually, the title tag will match the <h1> tag but it doesn't have to. Remember, the title tag sits in the <head> of the page, so it isn't visibly displayed on the page itself, but it can be read by search engines.

Meta Description

<meta name="description" content="">

This tag defines the description that will appear on the search result pages. It doesn't have a direct impact on rankings, but it will influence the click-through rate (CTR), as the description you provide for each page will influence whether or not people want to visit it. To optimise the meta description, write an attractive and persuasive description of the content that makes full use of the space available, using rich elements where possible.
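If you'd rather check the title and meta description with a script than by eye, here is a minimal sketch using only Python's standard library. The class name `HeadTagParser`, the function `audit_head`, and the sample page are my own illustrations, not part of any SEO tool, and the script only reports what it finds rather than judging whether a length is "good".

```python
from html.parser import HTMLParser


class HeadTagParser(HTMLParser):
    """Collects the <title> text and the meta description of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        # Text can arrive in several chunks, so append rather than assign.
        if self._in_title:
            self.title += data


def audit_head(html):
    """Return the title, its length, and whether a description is present."""
    parser = HeadTagParser()
    parser.feed(html)
    parser.close()
    title = parser.title.strip()
    description = parser.description
    return {
        "title": title,
        "title_length": len(title),
        "description": description,
        "has_description": bool(description and description.strip()),
    }


# Hypothetical sample page, used only for illustration.
sample = """<html><head>
<title>SEO Audit Guide</title>
<meta name="description" content="A step-by-step SEO audit.">
</head><body><h1>SEO Audit Guide</h1></body></html>"""

print(audit_head(sample))
```

To run this against one of your own pages, pass the page's HTML source into `audit_head` as a string.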

Headings

<h1></h1>

<h2></h2>

<h3></h3>

<h4></h4>

<h5></h5>

<h6></h6>

The heading tags (<h1>, <h2>, <h3>, <h4>, <h5>, <h6>) are useful for two reasons.

On the one hand, they enable you to properly structure the page visually, as the heading tags become smaller by default as you go from <h1> to <h6>. That is, the <h1> tag is usually very large because it contains the title and the <h6> tag is usually the smallest (although you could edit the CSS to modify this if you wish). A correct visual hierarchy helps the content to be more easily scannable (so the user can quickly understand the content), reducing the bounce rate and increasing conversions.

On the other hand, the heading tags are relevant in SEO, and it is recommended you place important text in them, e.g. keywords, synonyms of the keywords, etc. You should only have one <h1> tag on each page, which should contain the keywords you are targeting, and then use the other heading tags for the page subtitles (although it isn't necessary to use them all).
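The "one <h1> per page" rule is easy to check automatically. The sketch below is one possible way to do it with Python's standard library. The names `HeadingParser`, `heading_outline` and `count_h1` are my own, and the sample markup is made up to show a page that breaks the rule.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class HeadingParser(HTMLParser):
    """Records every heading on the page as a (tag, text) pair, in order."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._current = None   # heading tag we are currently inside, if any
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self._current = tag
            self._buffer = []

    def handle_data(self, data):
        if self._current:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            self.headings.append((tag, "".join(self._buffer).strip()))
            self._current = None


def heading_outline(html):
    """Return the page's headings in document order."""
    parser = HeadingParser()
    parser.feed(html)
    parser.close()
    return parser.headings


def count_h1(html):
    """Return how many <h1> tags the page contains."""
    return sum(1 for tag, _ in heading_outline(html) if tag == "h1")


# Hypothetical markup with two <h1> tags, which the audit should flag.
sample = "<h1>SEO Audit</h1><h2>Indexability</h2><h3>Robots.txt</h3><h1>Second title</h1>"

for tag, text in heading_outline(sample):
    print(tag, text)
print("h1 count:", count_h1(sample))
```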

Images: alt and title

<img src="" alt="" title="">

The alt and title attributes help Google to understand the images semantically. Therefore, in the alt and title attributes you should put a description of the image that includes the keywords you are looking to target, so Google has more information about what you are trying to optimise for.
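On a page with lots of images it is easy to miss one. Here is a rough sketch, again with Python's standard library, that lists the images whose alt attribute is missing or empty. `ImageAltParser` and `images_missing_alt` are names I made up for this example. Bear in mind that an empty alt can be intentional for purely decorative images, so treat the output as a list to review rather than a list of errors.

```python
from html.parser import HTMLParser


class ImageAltParser(HTMLParser):
    """Collects the src of every <img> whose alt text is missing or empty."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attrs = dict(attrs)
        alt = attrs.get("alt")
        # alt is None when the attribute is absent or has no value.
        if alt is None or not alt.strip():
            self.missing_alt.append(attrs.get("src") or "(no src)")


def images_missing_alt(html):
    """Return the src values of images that need an alt description."""
    parser = ImageAltParser()
    parser.feed(html)
    parser.close()
    return parser.missing_alt


# Hypothetical markup: one good image, one without alt, one with empty alt.
sample = (
    '<img src="a.png" alt="SEO audit checklist">'
    '<img src="b.png">'
    '<img src="c.png" alt="">'
)

print(images_missing_alt(sample))
```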

OG: (Open Graph)

<meta property="og:title" content="">

<meta property="og:description" content="">

<meta property="og:image" content="">

<meta property="og:url" content="">

<meta property="og:site_name" content="">

<meta property="og:locale" content="">

<meta property="og:type" content="">

Open Graph tags add valuable information to social networks and other platforms about your content. Through the Open Graph tags you can define how you want your URLs to look when they are shared on social networks. The contents of a website usually generate greater interaction and clicks from social networks if they are well optimised, with attractive titles, explanatory and persuasive descriptions, and quality images.
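To see which Open Graph tags a page is missing, something like the following sketch can help. It assumes the tags are written the way the Open Graph protocol defines them, with a `property` attribute and no spaces in the value (for example `property="og:title"`). The list of properties is simply the seven covered in this section, and `OpenGraphParser` and `missing_open_graph` are illustrative names of my own.

```python
from html.parser import HTMLParser

# The seven properties covered in this section.
OG_PROPERTIES = [
    "og:title",
    "og:description",
    "og:image",
    "og:url",
    "og:site_name",
    "og:locale",
    "og:type",
]


class OpenGraphParser(HTMLParser):
    """Collects every <meta property="og:..."> tag and its content."""

    def __init__(self):
        super().__init__()
        self.found = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attrs = dict(attrs)
        prop = (attrs.get("property") or "").strip().lower()
        if prop.startswith("og:"):
            self.found[prop] = attrs.get("content") or ""


def missing_open_graph(html):
    """Return the properties that are absent or have empty content."""
    parser = OpenGraphParser()
    parser.feed(html)
    parser.close()
    return [p for p in OG_PROPERTIES if not parser.found.get(p, "").strip()]


# Hypothetical page that only defines a title and an image.
sample = """<head>
<meta property="og:title" content="How to do an SEO Audit">
<meta property="og:image" content="https://example.com/cover.png">
</head>"""

print(missing_open_graph(sample))
```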

Twitter (card, description, title, etc.)

<meta name="twitter:card" content="summary_large_image">

<meta name="twitter:title" content="">

<meta name="twitter:description" content="">

<meta name="twitter:site" content="">

<meta name="twitter:creator" content="">

<meta name="twitter:image" content="">

Twitter has its own tags (known as Twitter Cards) to control how content is displayed on its platform.

Language

<html lang="">

The lang attribute is defined on the <html> element, which wraps the entire HTML document, and it is used to indicate the language in which the page is written. If the site has different language versions, then this attribute must be defined on each URL.

Hreflang

<link rel="alternate" hreflang="" href="">

Hreflang is an HTML attribute used to specify the language and geographical targeting of a webpage. If you have multiple versions of the same page in different languages, you can use the hreflang tag to tell search engines like Google about these variations. This helps them to serve the correct version to their users.
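As a quick check of both language signals, the sketch below reads the lang attribute from the <html> element and collects every hreflang alternate on the page. The names `LanguageParser` and `language_report` are my own, and the example.com URLs are placeholders.

```python
from html.parser import HTMLParser


class LanguageParser(HTMLParser):
    """Reads <html lang="..."> and all <link rel="alternate" hreflang="...">."""

    def __init__(self):
        super().__init__()
        self.page_lang = None
        self.alternates = {}   # hreflang value -> href

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "html":
            self.page_lang = attrs.get("lang")
        elif tag == "link":
            rel = (attrs.get("rel") or "").lower().split()
            if "alternate" in rel and attrs.get("hreflang"):
                self.alternates[attrs["hreflang"]] = attrs.get("href")


def language_report(html):
    """Return the declared page language and its hreflang alternates."""
    parser = LanguageParser()
    parser.feed(html)
    parser.close()
    return {"lang": parser.page_lang, "alternates": parser.alternates}


# Hypothetical English page that also has a Spanish version.
sample = """<html lang="en"><head>
<link rel="alternate" hreflang="en" href="https://example.com/en/">
<link rel="alternate" hreflang="es" href="https://example.com/es/">
</head><body></body></html>"""

print(language_report(sample))
```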

Meta Robots

<meta name="robots" content="">

The meta robots tag defines whether a specific page of the site is to be indexed and whether the links on it are to be followed (using the index/noindex and follow/nofollow values). If you give a page the index value, Google can index the URL. If you insert the follow value, then Google will follow the links on the page. The values are placed in pairs separated by commas, as follows: "index, follow" or "noindex, follow", etc.
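To make the value pairs concrete, here is a small sketch that turns the content of a meta robots tag into two yes/no answers. `parse_robots` is a name I chose for the example. It assumes the usual convention that a page with no restrictive value is treated as "index, follow", and that the shorthand value "none" means "noindex, nofollow".

```python
def parse_robots(content):
    """Interpret a meta robots content string such as "noindex, follow"."""
    directives = {d.strip().lower() for d in content.split(",") if d.strip()}
    blocked_all = "none" in directives   # shorthand for noindex, nofollow
    return {
        "index": "noindex" not in directives and not blocked_all,
        "follow": "nofollow" not in directives and not blocked_all,
    }


for value in ["index, follow", "noindex, follow", "noindex, nofollow", ""]:
    print(repr(value), parse_robots(value))
```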

Canonical Links

<link rel="canonical" href="">

A link with the rel="canonical" attribute tells the search engine that the URL in its href is the main version of the page. So if you have pages with similar or duplicate content, you can ensure that Google only considers that URL to be the important one for crawling and ranking purposes.
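When auditing canonicals, I find it useful to know whether a page points to itself, points to another URL, or has no canonical at all. The following is only a sketch: `CanonicalParser` and `canonical_status` are made-up names, the URLs are placeholders, and the comparison is deliberately naive (it only ignores a trailing slash).

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Collects the href of every <link rel="canonical"> on the page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if "canonical" in rel and attrs.get("href"):
            self.canonicals.append(attrs["href"])


def canonical_status(html, page_url):
    """Classify the page as 'missing', 'conflicting', 'self' or 'points elsewhere'."""
    parser = CanonicalParser()
    parser.feed(html)
    parser.close()
    if not parser.canonicals:
        return "missing"
    if len(set(parser.canonicals)) > 1:
        return "conflicting"
    same = parser.canonicals[0].rstrip("/") == page_url.rstrip("/")
    return "self" if same else "points elsewhere"


# Hypothetical page and URLs, for illustration only.
sample = '<head><link rel="canonical" href="https://example.com/seo-audit"></head>'

print(canonical_status(sample, "https://example.com/seo-audit/"))
print(canonical_status(sample, "https://example.com/seo-audit?ref=twitter"))
```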

Link Title

<a href="" title=""> </a>

The title attribute of the <a> link tag serves to provide additional information about the link. Using the keyword in it can be beneficial to enhance the positioning of the destination URL around that particular keyword.

Structured Microdata

Example: <div itemscope itemtype="http://schema.org/Recipe"> ... </div>

Structured microdata are increasingly important for SEO purposes, as they help Google to understand the content of a page with greater precision, meaning it can offer users more accurate search results.

In other words, if you add structured microdata from Schema.org to a recipe on your blog, you can help Google to understand that the webpage shows a recipe. This helps Google to better understand your site and makes your site look more attractive to users on SERPs, meaning you will get more clicks to your site.

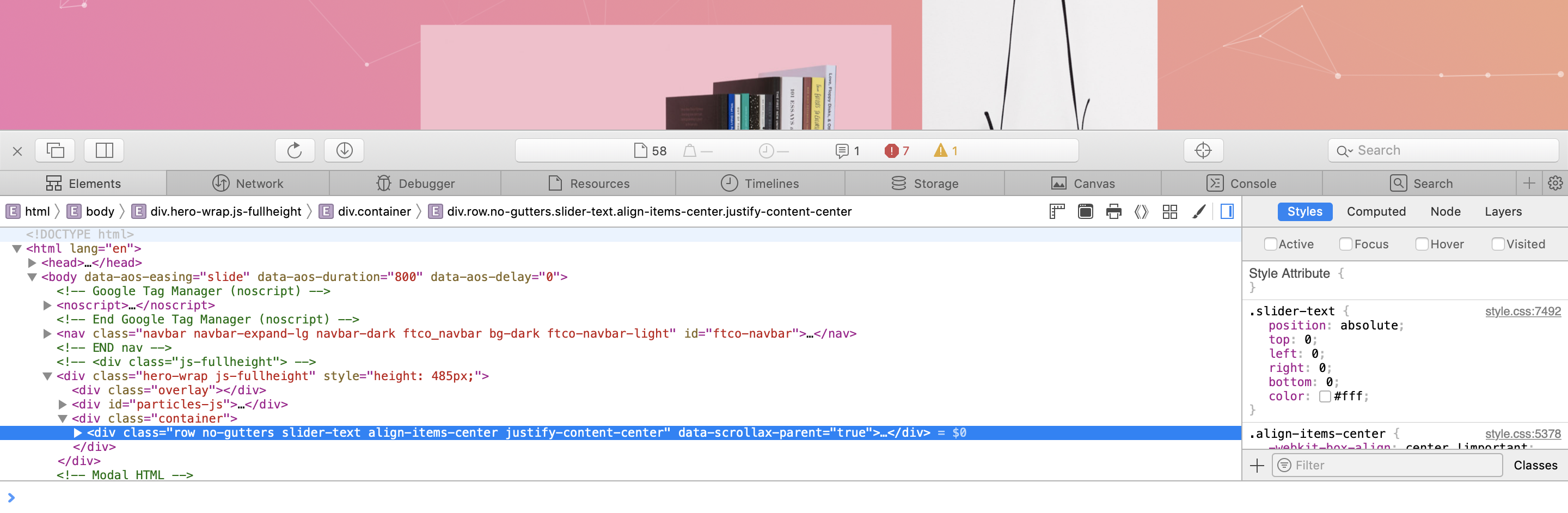
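If you want to confirm which Schema.org types a page declares through microdata, a sketch like this one can list them. It only looks at elements that carry both `itemscope` and `itemtype`, so it will not detect structured data added in other formats. `MicrodataParser` and `microdata_types` are names I invented for the example.

```python
from html.parser import HTMLParser


class MicrodataParser(HTMLParser):
    """Collects the itemtype of every element marked with itemscope."""

    def __init__(self):
        super().__init__()
        self.item_types = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        # itemscope is a valueless attribute, so only its presence matters.
        if "itemscope" in attrs and attrs.get("itemtype"):
            self.item_types.append(attrs["itemtype"])


def microdata_types(html):
    """Return the Schema.org types declared on the page, in document order."""
    parser = MicrodataParser()
    parser.feed(html)
    parser.close()
    return parser.item_types


# Hypothetical recipe markup based on the example in this section.
sample = """<div itemscope itemtype="http://schema.org/Recipe">
<h1 itemprop="name">Pancakes</h1>
</div>"""

print(microdata_types(sample))
```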

Checking Your Tags

There are numerous ways to check whether or not your page has all of these tags and elements in place. However, the easiest is probably to simply right-click on your page and either view the source code or inspect particular elements of that page.

Conclusion

If you like this content you’re going to love everything else I do. I want to provide you with unbelievable value so you, too, can achieve all your business goals. Let me know what you think of this post and I'd be happy to help any of you guys who may be struggling with your own SEO. Also check me out on Instagram, YouTube, and Facebook. 😁